15 Actors Who Support Using AI

Artificial intelligence has been moving into film and television in very practical ways. Actors are working with teams that use tools like voice synthesis, face restoration, and de-aging to help tell stories that span decades or that bring back a familiar sound. When these tools are used with consent and clear guidelines, they become part of the everyday toolkit alongside cameras, lights, and editing software.

The names below have either used AI-assisted techniques in their own projects or have spoken openly about trying the technology in professional contexts. You will see examples such as licensed voice models for legacy characters, machine learning that restores archival footage, and synthetic voices that give performers options after illness or injury. Each entry notes where and how the technology showed up so you can see the specific use case on set or in post.

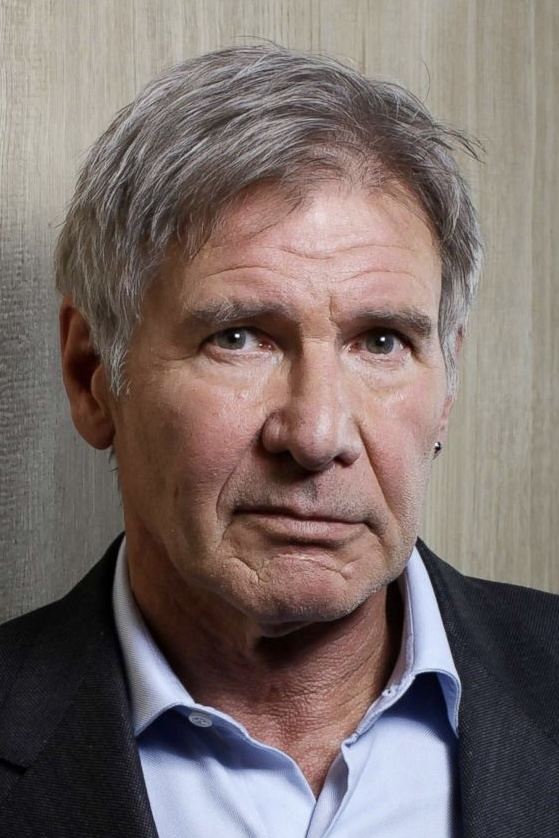

Harrison Ford

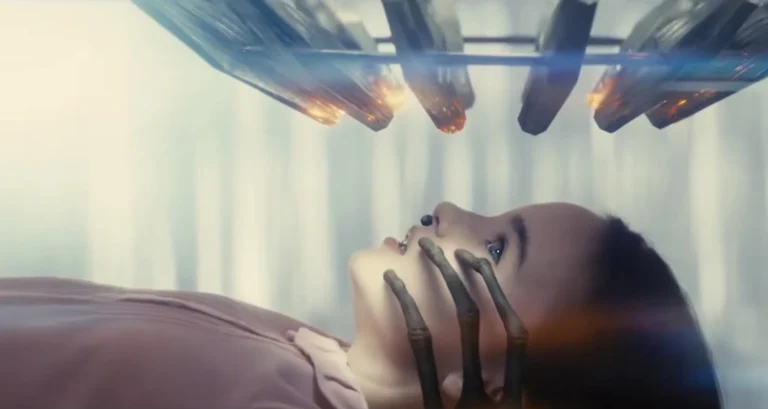

For de-aged flashbacks in ‘Indiana Jones and the Dial of Destiny’, the production blended new performance capture with archival material from earlier Lucasfilm projects. The pipeline used machine learning to match skin texture, lighting, and facial motion so a younger face could track precisely to a present-day performance. The work relied on studio-owned archives and on-set reference so the result aligned with Ford’s acting choices.

Industrial Light and Magic integrated the de-aged material with practical plates so the character could move through complex action scenes without visible seams. The team trained models on historical footage of Ford from prior films, which helped the system reproduce period-accurate details such as hairline and micro-expressions while maintaining continuity from shot to shot.

Mark Hamill

In ‘The Mandalorian’ and ‘The Book of Boba Fett’, the productions used a licensed synthetic voice to portray a younger Luke Skywalker. The system learned from authorized recordings to generate a youthful vocal timbre while Hamill’s performance and direction guided phrasing and line reads. This approach allowed the character to sound as he did in the era the story depicted.

The production paired that voice work with facial restoration on a younger face double. Machine learning tools assisted with lip sync and fine facial details so the dialogue and expressions matched cleanly. The combination let the crew place Luke in new scenes while keeping legal and creative control within the studio and performer agreements.

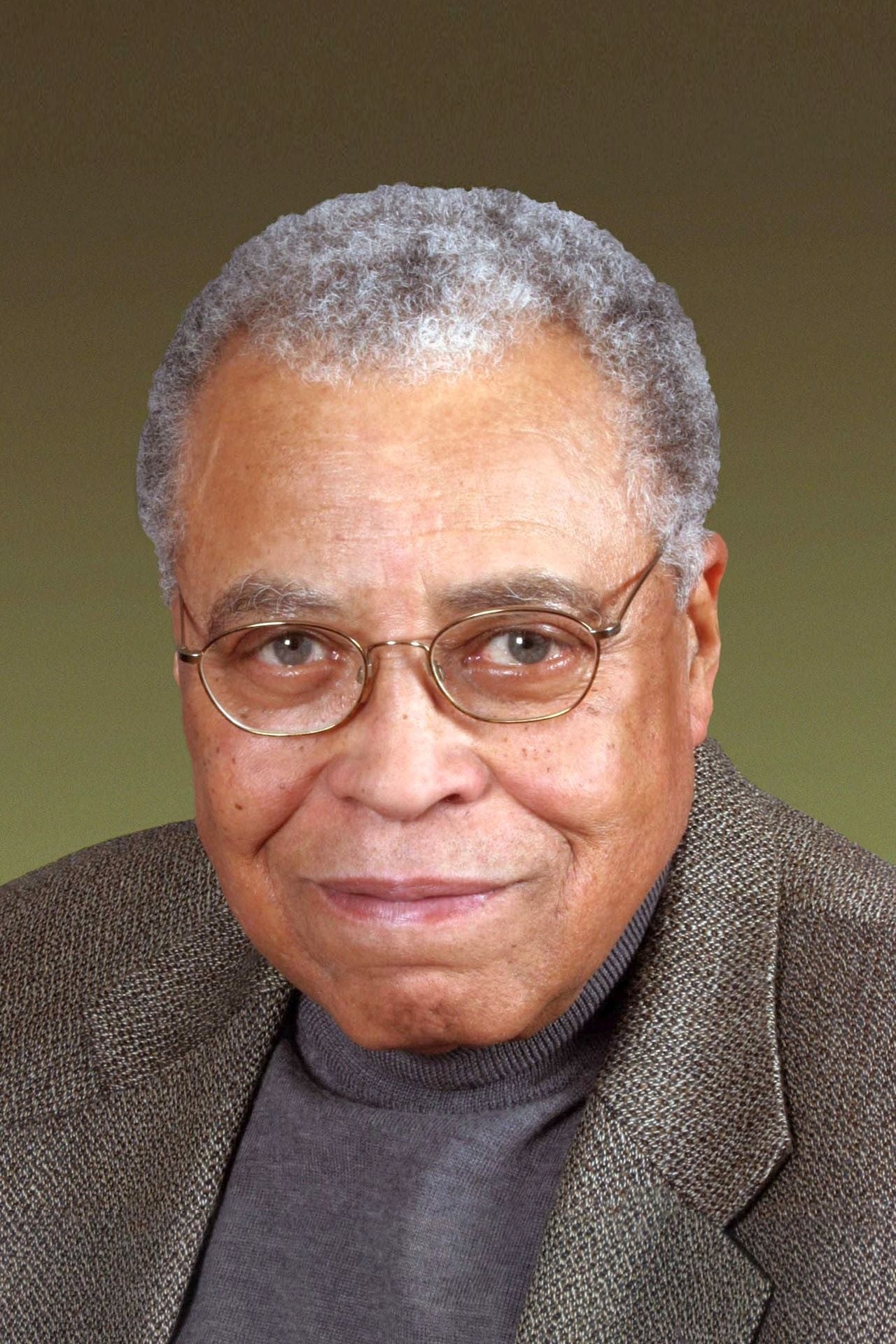

James Earl Jones

For Darth Vader in ‘Obi-Wan Kenobi’, Jones authorized the use of a synthetic voice built from approved recordings. The licensed model preserved the depth and resonance associated with the character while allowing precise control over line delivery in the mix. This kept an iconic sound consistent without requiring long voice booth sessions.

The voice system integrated into a standard dialogue pipeline so sound editors could adjust pacing, emphasis, and breath. Because the model was trained on sanctioned material, it produced results that matched decades of performances, which helped the show maintain continuity with earlier films in the same universe.

Val Kilmer

After throat cancer affected his speech, Kilmer worked with a voice synthesis company to build a model of his voice using archival recordings. That tool enabled a brief but important scene in ‘Top Gun: Maverick’ where the character speaks a few lines that match his established sound. The production used the synthesized voice under the guidance of Kilmer and the filmmakers.

The voice model became part of the editorial process, with sound teams shaping phrasing and timing in the mix to support the scene. The workflow gave Kilmer a way to participate vocally in future projects while retaining control over how the tool is used and credited.

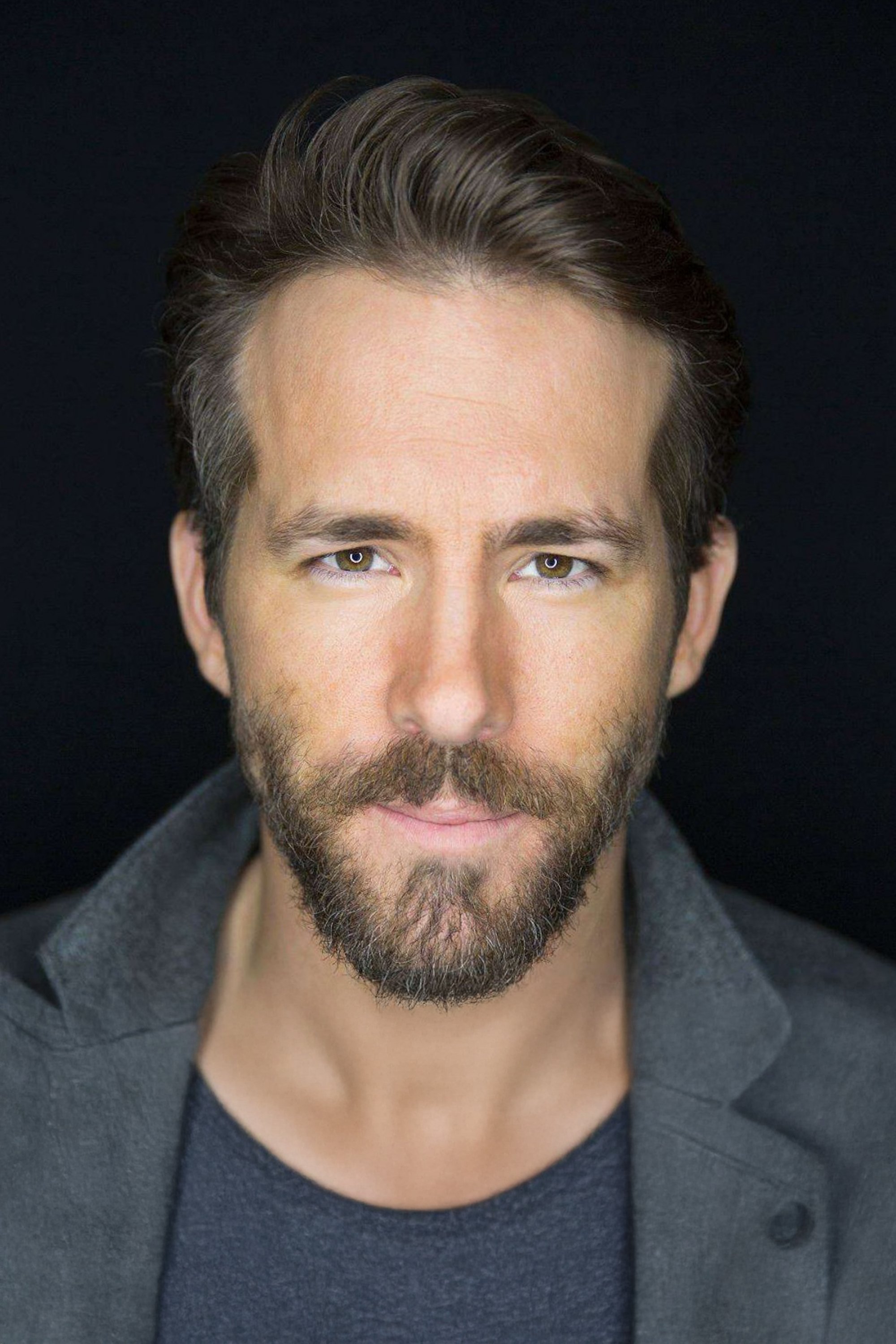

Ryan Reynolds

Reynolds tested generative tools in marketing by having a script draft produced by an AI model for a Mint Mobile spot. He then recorded that copy as written to show how the system handled brand tone and structure. The experiment demonstrated how AI can assist with first-pass writing in advertising while final decisions still come from the creative team.

He has also discussed using AI for quick concept lines and early brainstorming around campaigns. In that context the tool works like an assistant that produces options for timing, taglines, and variations, which a human team then selects and refines before production.

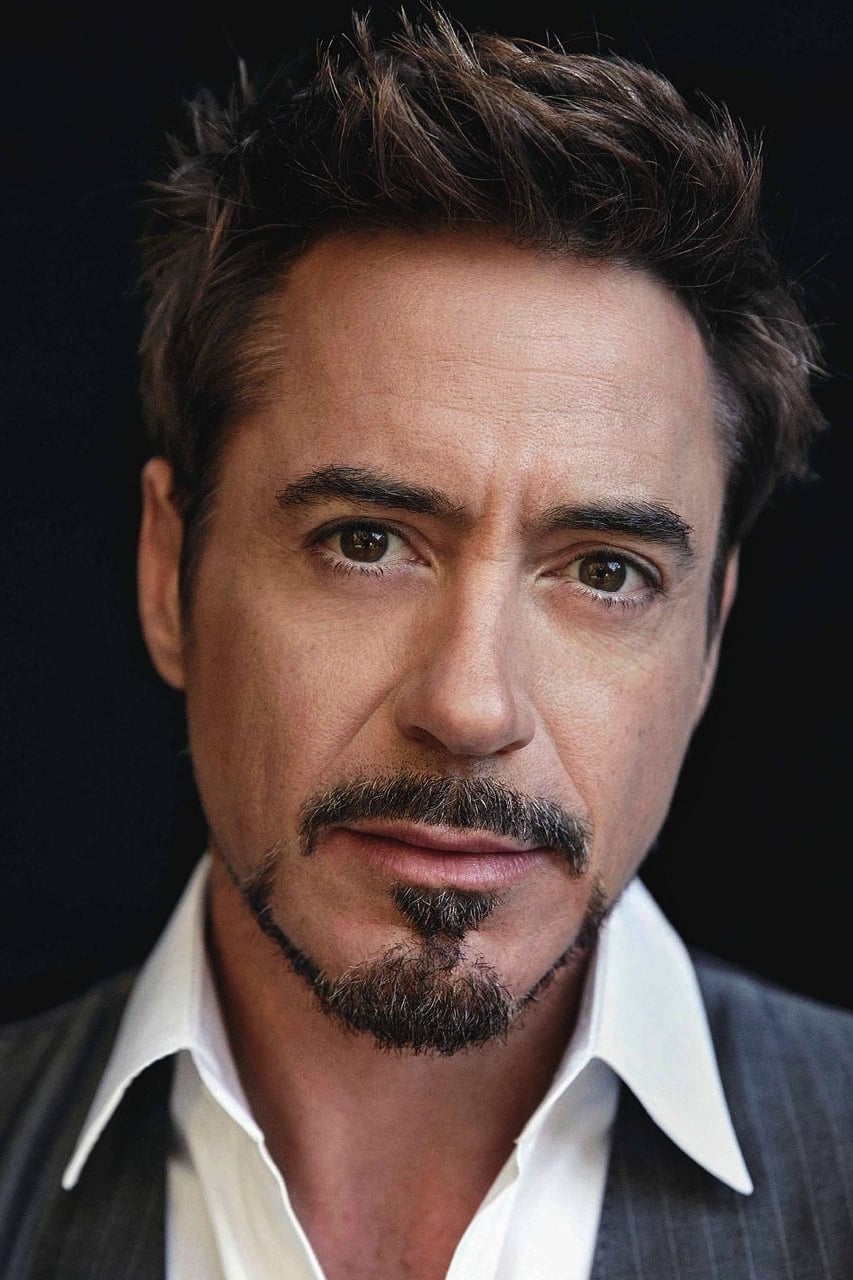

Robert Downey Jr.

Downey hosted the docuseries ‘The Age of A.I.’, which profiled research labs and startups applying machine learning to fields such as medicine and environmental monitoring. The series walked through real deployments so viewers could see how models are trained, validated, and integrated into devices and workflows. This gave audiences a clear view of practical AI rather than abstract hype.

He has also highlighted projects that track emissions and optimize energy use with data-driven models. By focusing on measurable outcomes, these efforts show how AI can support problem solving in areas that benefit from constant sensing and prediction, which is useful context for future storytelling as well.

William Shatner

Shatner recorded long-form interviews with an interactive video platform that uses AI to index responses and serve them conversationally. Viewers can ask questions and receive answers in his own words, which preserves a personal history in a form that feels direct and searchable. The work relied on careful capture sessions to build a robust dataset.

The system functions like a guided archive that can pull related snippets to answer follow-ups. This approach turns a single recording period into a resource for museums and fans, and it demonstrates how performers can extend their voice and insights beyond traditional formats while controlling what is recorded and released.

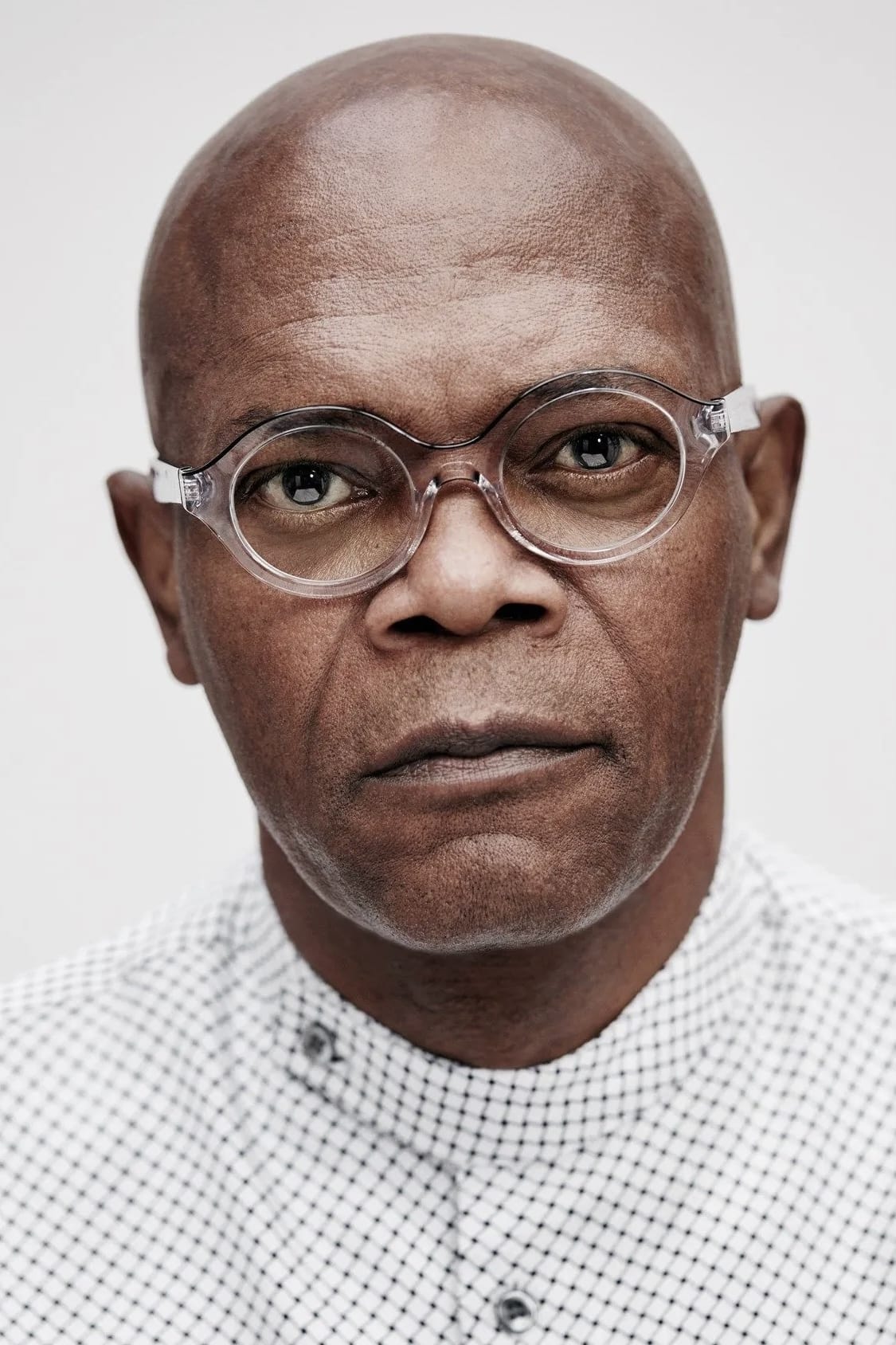

Samuel L. Jackson

Marvel used de-aging on Jackson across a full feature in ‘Captain Marvel’, which required frame-by-frame facial work guided by machine learning. The team tracked skin motion, eye detail, and subtle muscle changes so a younger look could hold up in daylight scenes and close-ups. Jackson’s on-set performance anchored the process so the character’s timing and attitude stayed intact.

This level of de-aging needed consistent training data and extensive quality-control passes. Machine learning helped automate parts of the matchmove and texture restoration, which allowed artists to focus on the hardest shots. The result kept a familiar character active within a story set decades earlier.

Michael Douglas

Douglas appears as a younger Hank Pym in flashbacks in ‘Ant-Man and the Wasp’, where facial restoration tools aided the de-aging. The work combined tracked facial geometry with learned texture synthesis to keep skin detail natural under different lighting setups. The intent was to match how the actor looked in earlier decades without compromising his present-day performance.

Editorial teams coordinated with the VFX vendor to plan shot lengths and angles that supported the effect. With machine learning assisting in cleanup and detail recovery, artists delivered sequences that cut smoothly with contemporary scenes, which made the flashbacks feel like part of a continuous timeline.

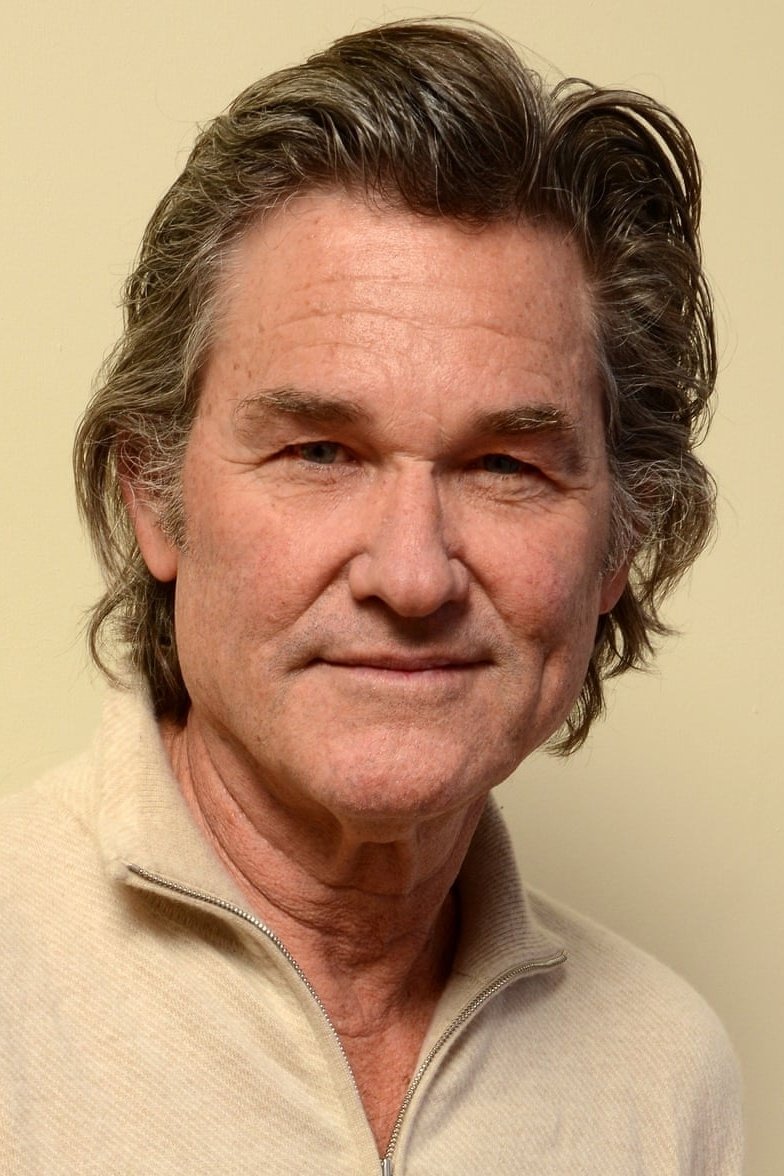

Kurt Russell

In ‘Monarch: Legacy of Monsters’, the production presented younger scenes connected to Russell’s character and aligned them with present-day material. The pipeline used de-aging assisted by machine learning to harmonize facial features across action and dialogue shots. This preserved continuity between different time periods in the series.

The team also leveraged reference from extensive photography and scans to guide the training process. With those assets, the system could better reproduce specific facial characteristics and maintain consistency across episodes, which is essential in serialized storytelling.

Wyatt Russell

The same series used Wyatt Russell’s performance to help portray a younger version connected to Kurt Russell’s character. De-aging and facial alignment tools learned from family resemblance and reference material to produce convincing matches in motion. This gave the narrative a smooth bridge between eras without changing actors mid-scene.

The production used controlled lighting and camera tests to feed high-quality data into the pipeline. With that preparation, machine learning models handled detailed tracking and surface reconstruction so the final images matched skin tone and facial structure across a range of expressions and camera distances.

Ashton Kutcher

Kutcher has discussed using AI video generation for quick previs, test shots, and low-cost concept reels. By generating short scenes or location plates, a creative team can evaluate ideas before booking crews or renting stages. This use case treats AI as a planning tool that reduces waste while keeping full-scale production for final photography.

He has also evaluated how text-to-video and image tools can generate variations for pitch decks. In that workflow, AI helps visualize wardrobe, props, and lighting ideas so departments can align early. The selected look then guides traditional builds once a project is greenlit.

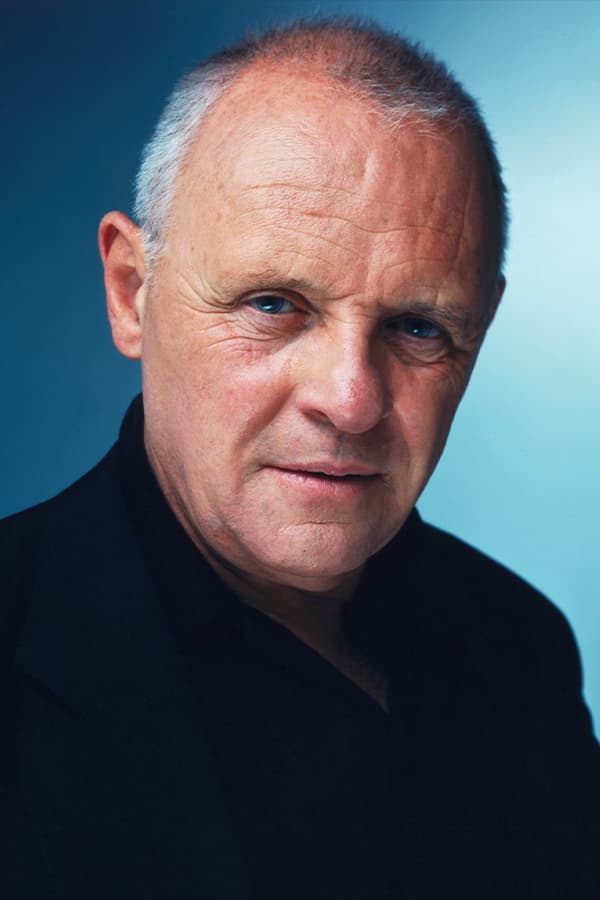

Anthony Hopkins

Hopkins collaborated on projects that use AI in visual art, where generative systems combine curated inputs with style rules to produce collections that reflect a defined motif. The actor provided creative direction and selected outputs for release, which showed how performers can engage with AI as another creative instrument.

He has also worked with music and voice technology that uses machine learning for composition support and tone shaping. These tools generate options that composers and engineers evaluate, which speeds up exploration while leaving final arrangement and performance in human hands.

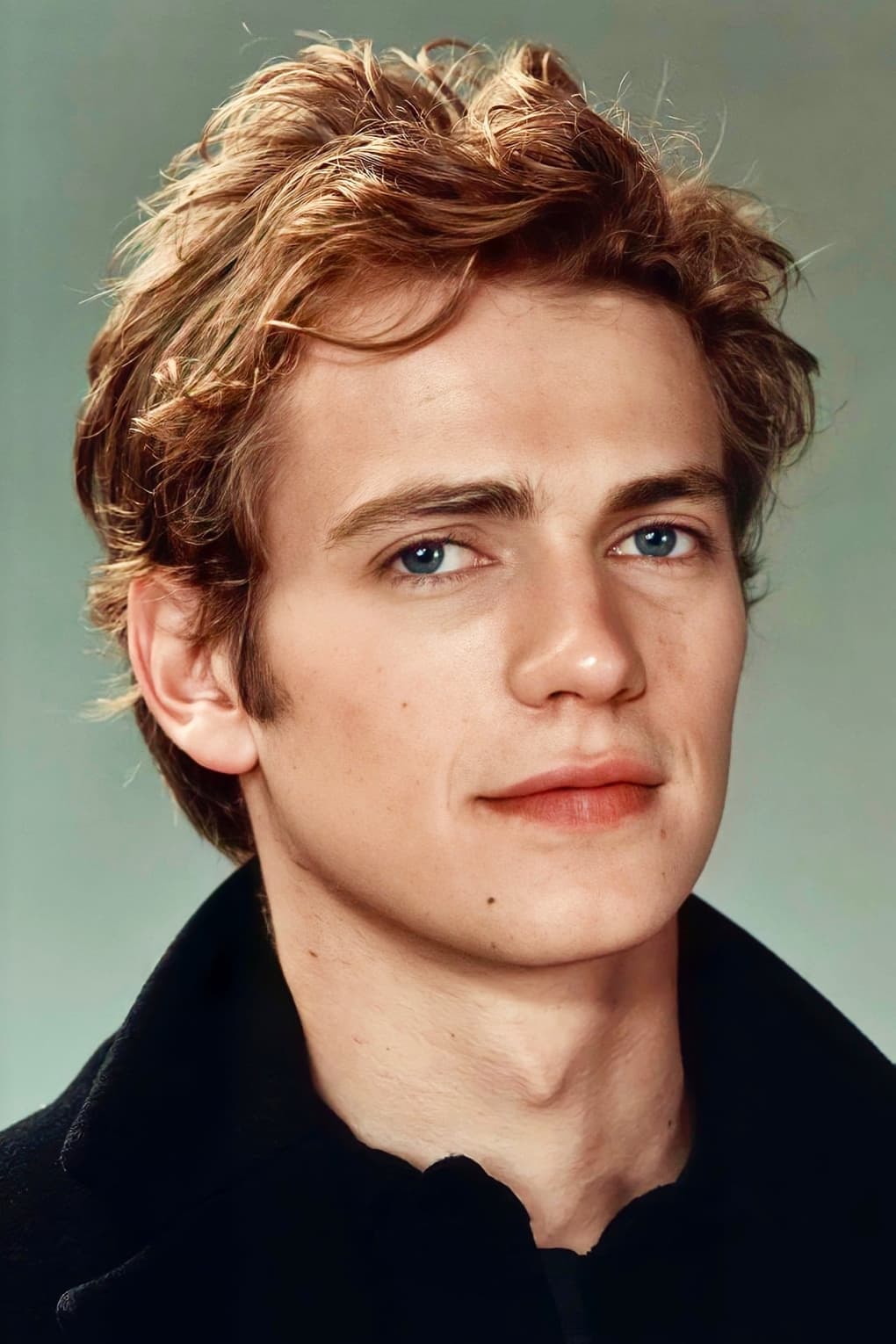

Hayden Christensen

When Christensen portrayed Anakin Skywalker in ‘Obi-Wan Kenobi’ and later in ‘Ahsoka’, the production used de-aging and voice processing that benefited from machine learning. The goal was to align the character’s look and sound with specific points in the established timeline while keeping Christensen’s movement and delivery. This allowed new scenes to fit smoothly with earlier stories.

The workflow involved gathering detailed reference, from costume tests to facial scans, so models had accurate targets. Effects teams then blended these outputs with practical makeup and lighting to reach a cohesive image that held up in close-ups and fast-action moments.

Ewan McGregor

McGregor’s return as Obi-Wan included sequences that required precise facial work and cleanup where machine learning assisted with detail restoration. These tools helped maintain continuity across scenes that referenced earlier eras while staying true to the performance captured on set. The process ensured the character looked consistent with audience memory.

Post teams used AI-assisted roto and paint tools to accelerate complex composites, which kept schedules manageable for episodic delivery. The combination of planning, practical craft, and trained models supported a look that matched the franchise’s visual history without limiting what could be shot on location.

Share which examples you think are most promising for future productions in the comments.