Celebs Who Sued Over Deepfakes or AI Usage

Artificial intelligence has collided with fame in messy ways, and more public figures are taking their grievances to court. Some cases target explicit deepfakes of a person’s face or voice. Others challenge how AI companies trained their models on books or performances without permission. The legal claims vary, but the through line is control over identity, creative work, and how technology repurposes both.

Below are celebrities and estates who actually filed lawsuits over deepfakes or broader AI usage. You will see actions ranging from right of publicity and copyright claims to consumer protection arguments. Where there have been updates, you will also see what courts have done so far or how the disputes ended.

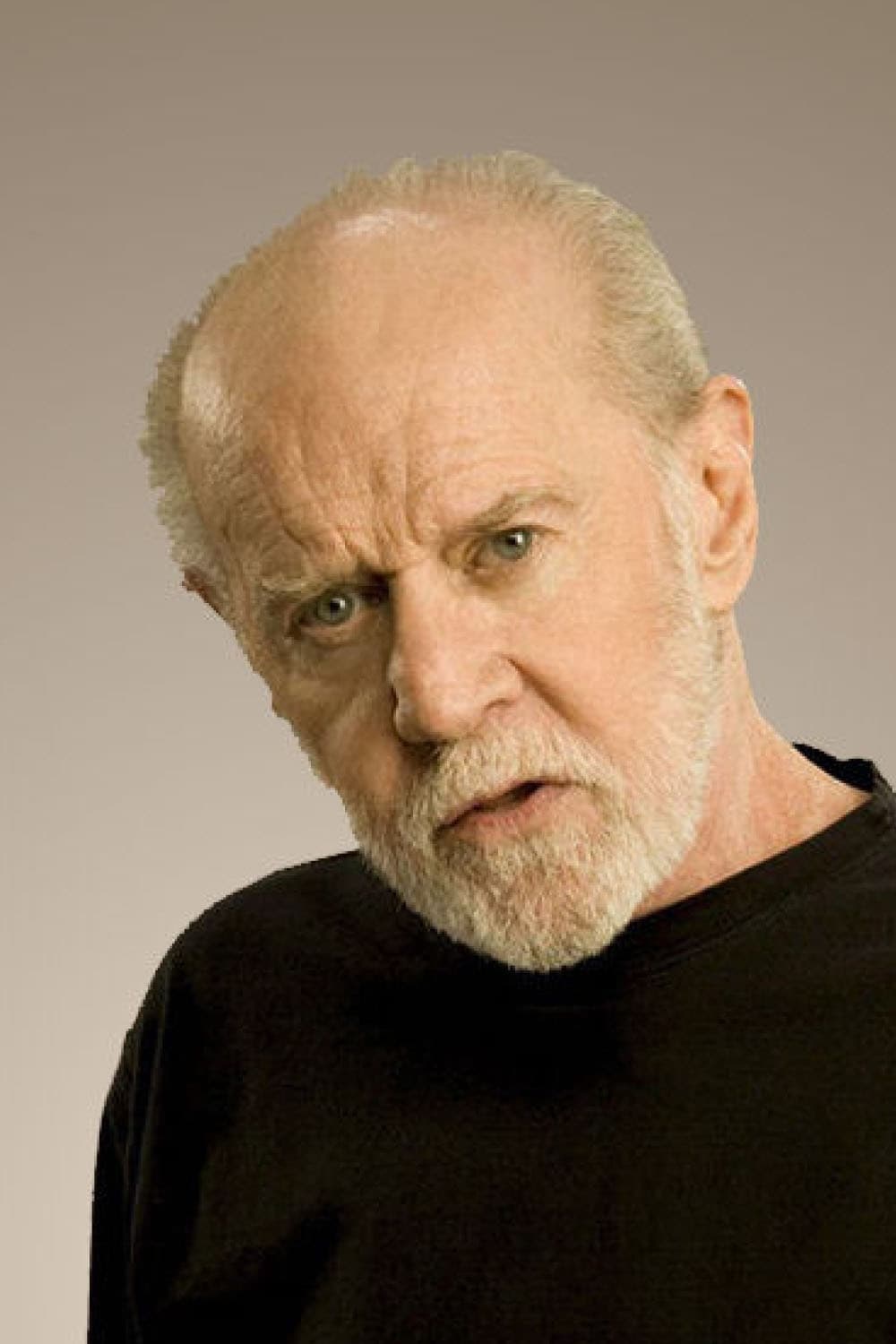

George Carlin’s Estate

The estate sued over an AI-generated comedy special that copied the late comedian’s name, voice, and style. The filing argued that the project used recordings of his work to build a program that produced a longer routine in his voice without permission, and that the title and promotion traded on his identity.

The case ended with removal of the material and written commitments not to use his name, voice, or likeness without consent. The settlement became an early reference point for how estates can stop synthetic imitations that rely on a deceased performer’s catalog.

Sarah Silverman

Silverman filed suits against AI developers alleging that her books were copied into training datasets without authorization. The complaints described large-scale ingestion of full texts and set out how model outputs can echo protected expression from the underlying works.

She sought damages and court orders to restrict further use of her books in training. Parts of the litigation moved forward after early motions, keeping the core claims about unlicensed copying in play.

Richard Kadrey

Kadrey joined as a co-plaintiff in author lawsuits that challenge the use of complete books to train large language models. The pleadings explained how training corpora were compiled and how outputs may reproduce distinctive passages or plot elements from his novels.

The suits ask for monetary relief and injunctive measures that would require changes to training practices. They also press for deletion of any stored copies of the books that remain inside developer systems.

Christopher Golden

Golden’s claims mirror the broader author actions that target unlicensed book ingestion during AI training. The complaints detail both the copying at the ingestion stage and the risks of output that competes with authorized works.

He and his co-plaintiffs request damages and an injunction that would force developers to obtain licenses or stop using covered texts. They also seek to establish that wholesale copying of books for training is not fair use.

Mona Awad

Awad sued over the use of her novels in AI training. The filings describe how copies of complete books allegedly entered datasets and how the models can produce detailed summaries and text that track protected expression.

Her case asks the court to award damages and to order deletion of her works from training sets. It also seeks to bar future use without explicit permission.

Paul Tremblay

Tremblay filed alongside Awad with similar allegations that his books were copied in full to train commercial models. The complaints explain how outputs can reproduce specific narrative details that belong to the original works.

He seeks both damages and court orders that would prohibit further use of his novels in training. The requested relief includes the removal of existing copies and changes to developer practices going forward.

George R. R. Martin

Martin joined a coordinated author action against an AI developer that focuses on copying entire books to build models. The complaint sets out claims for copyright infringement and related violations tied to training and downstream outputs.

The suit asks for statutory and actual damages and for injunctions that would stop unlicensed use. It also seeks to require developers to implement safeguards that prevent reproduction of protected text.

John Grisham

Grisham is among the high-profile novelists in the same coordinated action. The pleadings argue that large-scale scraping and storage of books for training violate the exclusive rights of authors and harm paid licensing markets.

The requested remedies include damages and court oversight of any future training that involves books. The case also seeks deletion of copies and a bar on outputs that reproduce protected material.

Jodi Picoult

Picoult joined the author group with claims that her novels were ingested without consent. The complaints detail how datasets of books were assembled and how they were used to train commercial systems.

She is seeking damages and injunctions that would prevent continued use of her works. The filing also asks the court to require transparent disclosures about training data that includes books.

David Baldacci

Baldacci’s claims track the same theory that complete copying of books for training is unlawful. The litigation outlines how model outputs can serve as substitutes for the original works and how that undermines authorized derivatives and licensed summaries.

He seeks damages along with an order that prohibits developers from using his texts without a license. The case also calls for deletion of any stored copies that remain inside model infrastructure.

Jonathan Franzen

Franzen appears in the coordinated action with allegations that his novels entered training corpora without permission. The filings state that ingestion of full texts exceeds what copyright law allows and that outputs can reproduce protected prose.

The suit requests damages and forward-looking relief that would force changes to training methods. It also seeks a clear judicial statement that wholesale book copying for training is not permitted.

Michael Chabon

Chabon filed a separate author lawsuit that targets similar training practices at a different developer. The complaint asserts that unlicensed ingestion of whole books violates copyright and fuels outputs that closely track the original works.

He seeks damages and an injunction that would bar the developer from using his books without permission. The case also asks for deletion of copies and for measures that prevent future reproduction of protected text.

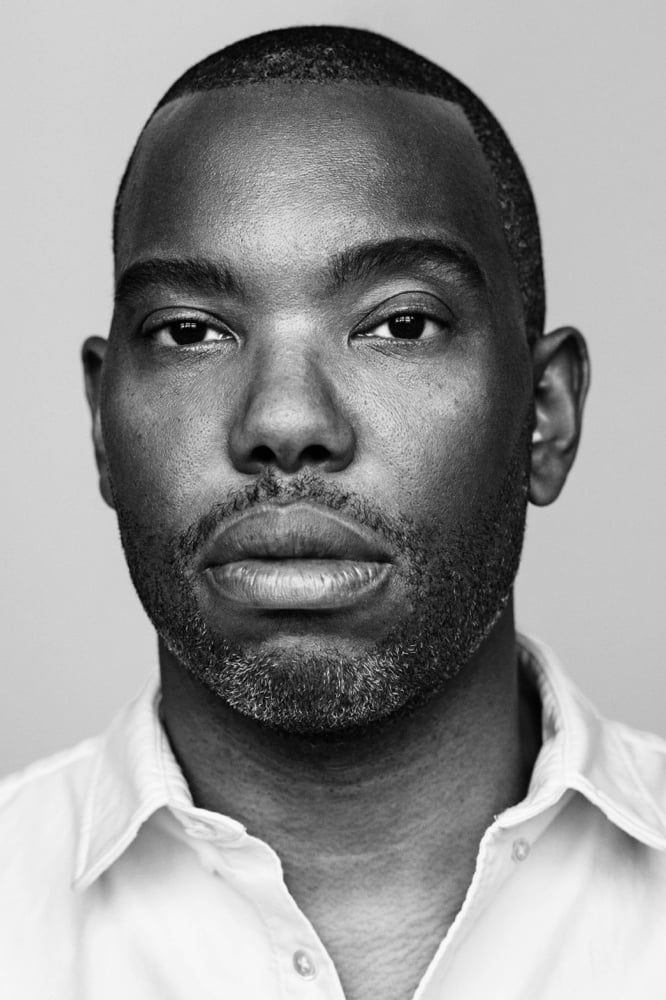

Ta-Nehisi Coates

Coates joined an author suit that alleges unlicensed copying of entire books for model training. The filings explain that the developer benefited commercially from the ingestion while authors received no compensation or control.

The requested relief includes damages and court orders that would require licenses or prohibit further use. The case also seeks removal of the books from any training or reference datasets.

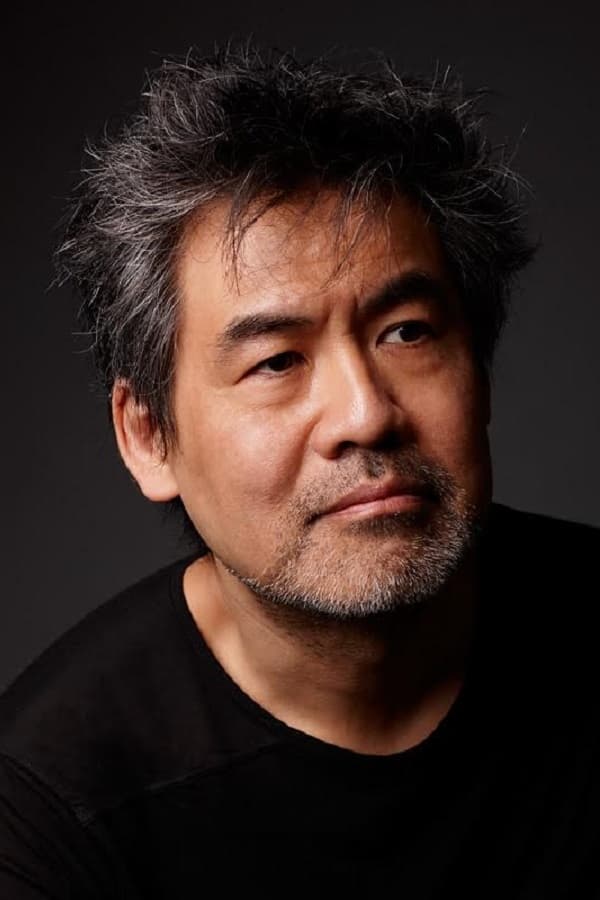

David Henry Hwang

Hwang sued over the alleged use of his works in training large language models without consent. The complaint points to the presence of complete texts in datasets and to outputs that can echo protected passages.

He asks for damages and for injunctive relief that would block continued use of his works. The case also requests deletion of any stored copies and affirmative safeguards against future reproduction.

Matthew Klam

Klam’s lawsuit focuses on copying of his books into training sets and the risk of output that tracks his protected prose. The pleadings describe the path by which complete texts entered developer corpora.

He seeks damages and court orders to remove his works from training pipelines. The filing also asks for changes to developer policies around sourcing and documenting training data.

Rachel Louise Snyder

Snyder’s complaint alleges that her books were copied at scale to train a commercial AI system without authorization. The pleadings set out how the developer stored and used the works as part of model building.

She seeks damages and an injunction to stop further use of her titles. The suit also requests deletion of copies and safeguards to prevent future reproduction of protected expression.

Ayelet Waldman

Waldman joined as a plaintiff with claims that her novels were included in unlicensed training corpora. The filing explains how ingestion of full-length books gives developers commercial advantages without compensating authors.

She asks for monetary relief and for court orders that require licenses or bar continued use. The complaint also seeks removal of her works from any datasets tied to training.

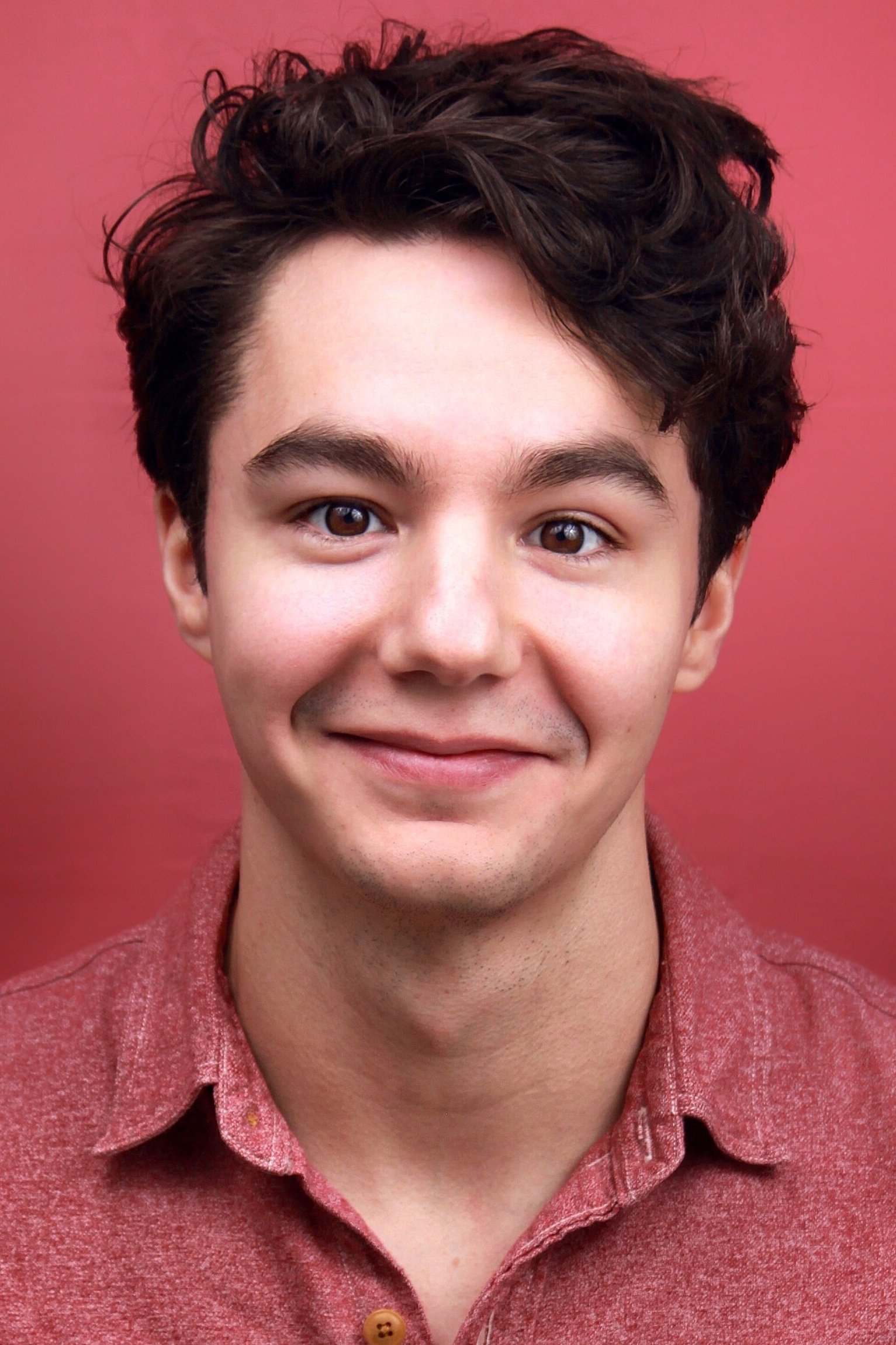

Paul Skye Lehrman

Lehrman sued a voice AI company after discovering that a synthetic voice matched his performance and was offered commercially. The complaint states that the company obtained recordings and created a clone that appeared in multiple projects without authorization.

He brings right of publicity and related claims and seeks damages and injunctive relief. The case argues that voice cloning for commercial use requires clear consent and a license from the performer.
